Seven RTX 5090 GPUs power AI server worth over $30,000 — over 4000W of power and 224GB of memory in a single frame

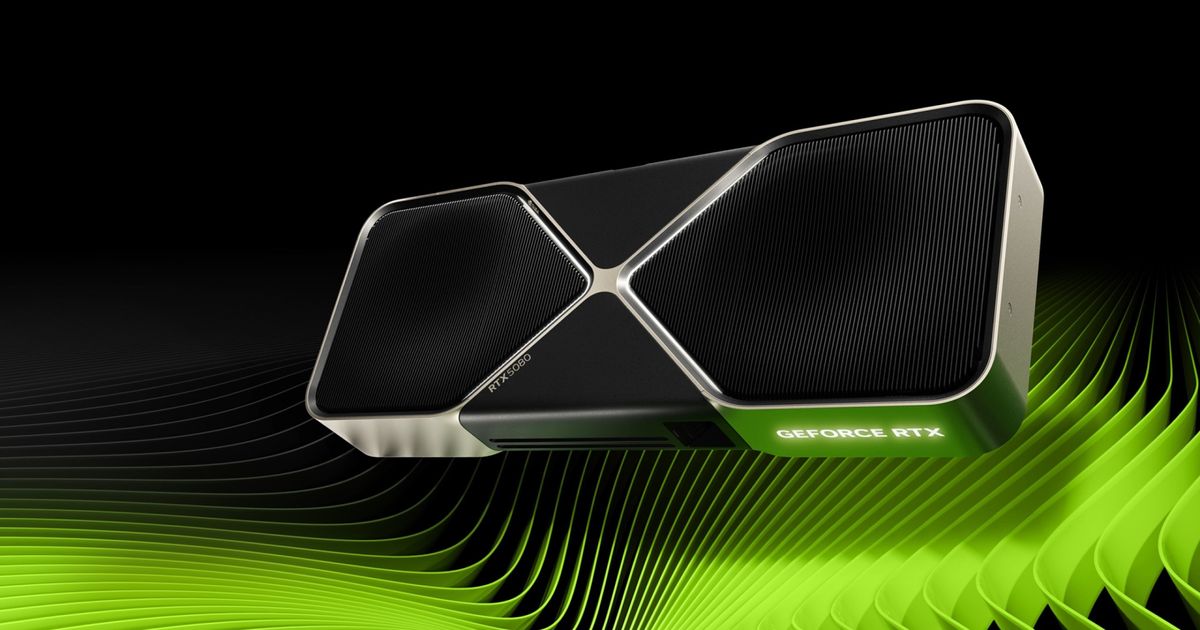

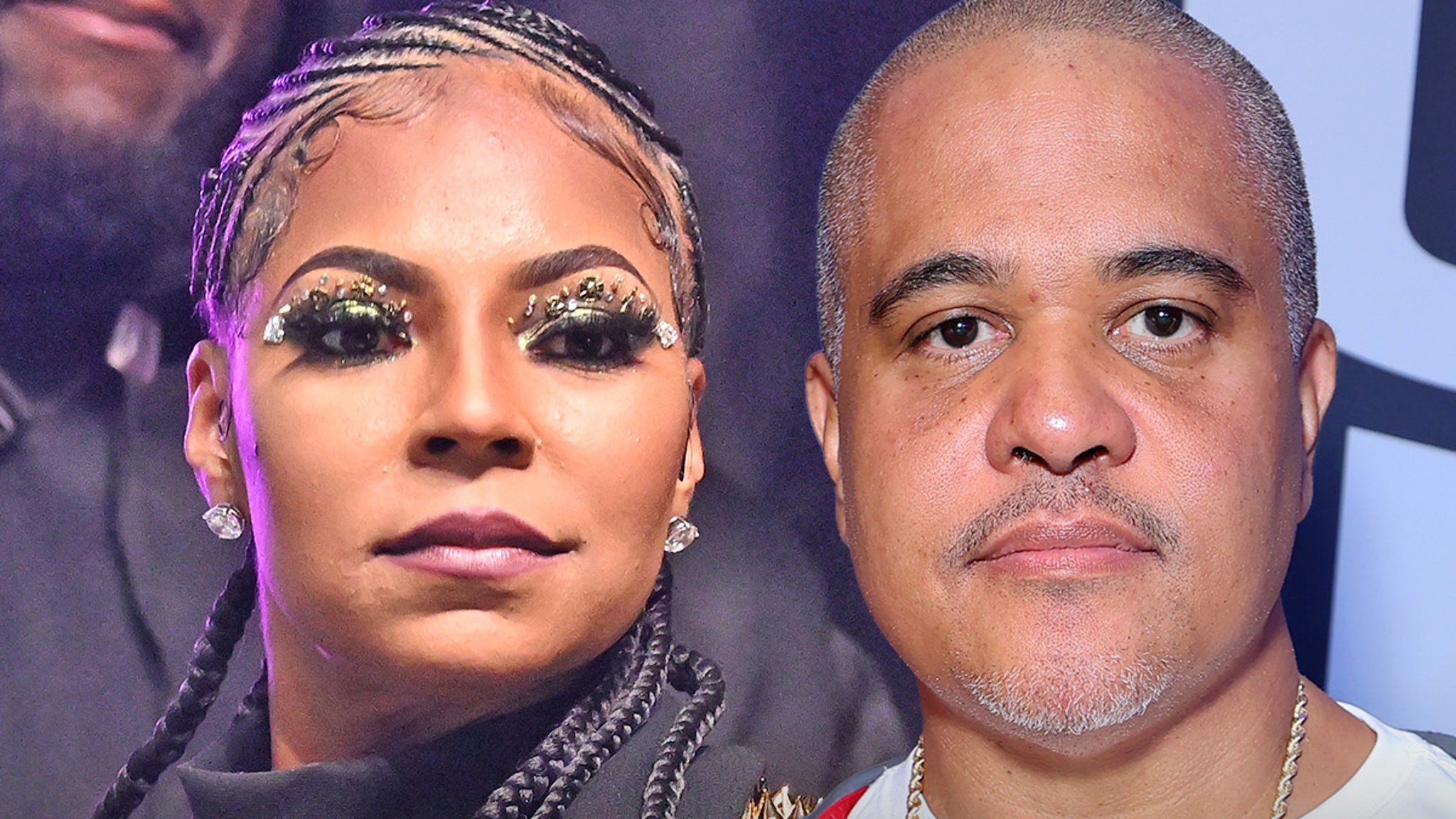

It's no secret that Nvidia's RTX 5090 is a powerhouse for AI workloads, given Blackwell's architectural improvements and support for lower-precision data formats. A Vietnamese shop, Nguyencongpc (via I_Leak_VN), recently shared a few photos of an AI rig it built for a customer, featuring seven RTX 5090s hooked up to several 2,000W PSUs in an open-air GPU frame. Although the average RTX 5090 costs nearly $4,000, professionals find the performance worth the investment, even though Nvidia does not position these cards as workstation solutions.

The elusive RTX 5090 has been off the shelves and out of reach for most of its lifespan unless you're willing to pay through the nose. This is mainly because Nvidia's profit margins are significantly higher on its server-focused Blackwell B100/B200/B300 GPUs than on typical GeForce products. For Nvidia, allocating the limited number of wafers it procures from TSMC toward these AI accelerators is simply more profitable.

The AI system resembles mining rigs from the pandemic era. The open-air GPU frame carries seven Gigabyte RTX 5090 Gaming OC units connected via what appear to be PCIe riser cables and powered by multiple Super Flower Leadex 2000W PSUs. With these GPUs going for $3,500-$4,000 on a good day, the seven cards alone come to roughly $24,500-$28,000, so the complete system can easily be valued at over $30,000 once the power supplies and the rest of the platform are included.

This single frame offers a massive 224GB memory pool (seven cards at 32GB each) for AI training and inference. Of course, this isn't unified memory, so developers must rely on techniques like model parallelism to distribute workloads efficiently across multiple GPUs. On that note, Nvidia's forthcoming Blackwell workstation cards are outfitted with up to 96GB of VRAM and cost between $8,000 and $9,000.
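To make the "not unified memory" point concrete, here is a minimal Python sketch of the headline arithmetic and of a naive layer-wise split across cards. The per-card figures (32GB of VRAM, 575W board power) are taken from Nvidia's published RTX 5090 specifications rather than from the shop's photos, and `rig_totals` and `partition_layers` are hypothetical helper names, not part of any framework. Real frameworks use far more sophisticated placement strategies.

```python
# Assumptions (not stated by the shop): per-card figures from Nvidia's
# published RTX 5090 specifications.
VRAM_PER_GPU_GB = 32
POWER_PER_GPU_W = 575
NUM_GPUS = 7


def rig_totals(num_gpus=NUM_GPUS):
    """Return (total VRAM in GB, total GPU board power in W) for the rig."""
    return num_gpus * VRAM_PER_GPU_GB, num_gpus * POWER_PER_GPU_W


def partition_layers(layer_sizes_gb, num_gpus=NUM_GPUS, capacity_gb=VRAM_PER_GPU_GB):
    """Greedily assign consecutive layers to GPUs without exceeding capacity.

    This is a toy version of layer-wise (pipeline-style) model parallelism:
    each layer must fit entirely on one card, because the pool is not unified.
    """
    placement = [[] for _ in range(num_gpus)]
    used = [0.0] * num_gpus
    gpu = 0
    for index, size in enumerate(layer_sizes_gb):
        if size > capacity_gb:
            # A single layer larger than one card cannot be placed at all,
            # no matter how large the combined pool is.
            raise ValueError(f"layer {index} ({size} GB) exceeds one GPU's memory")
        if used[gpu] + size > capacity_gb:
            gpu += 1  # current card is full, move on to the next one
            if gpu >= num_gpus:
                raise MemoryError("model does not fit across the available GPUs")
        placement[gpu].append(index)
        used[gpu] += size
    return placement


if __name__ == "__main__":
    print(rig_totals())
    print(partition_layers([10] * 9))
```

Note that a single 40GB block would be rejected even though the combined pool is 224GB, which is the practical difference between seven 32GB cards and one large unified memory space.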

Even though the RTX Pro 6000 Blackwell offers more memory, multiple RTX 5090s provide a performance advantage for AI tasks where raw compute is essential, especially on a limited budget. However, when price is not a concern and you wish to scale AI more effectively, more VRAM per individual GPU becomes essential to handle complex models with hundreds of billions of parameters.
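As a rough illustration of why per-GPU memory matters at that scale, the sketch below estimates the memory needed for model weights alone at different precisions. The 200-billion-parameter model is a hypothetical example, the function names are illustrative, and the estimate deliberately ignores activations, KV cache, and optimizer state, all of which add to the real requirement. Sizes use decimal gigabytes (10^9 bytes).

```python
import math

# Bytes needed to store one parameter at each precision.
BYTES_PER_PARAM = {"fp32": 4.0, "fp16": 2.0, "fp8": 1.0, "fp4": 0.5}


def weight_footprint_gb(num_params, precision):
    """Memory for the weights only, in decimal GB (1 GB = 1e9 bytes)."""
    return num_params * BYTES_PER_PARAM[precision] / 1e9


def min_gpus_for_weights(num_params, precision, vram_per_gpu_gb):
    """Lower bound on the GPU count needed just to hold the weights."""
    return math.ceil(weight_footprint_gb(num_params, precision) / vram_per_gpu_gb)


if __name__ == "__main__":
    params = 200_000_000_000  # hypothetical 200B-parameter model
    for precision in ("fp16", "fp8", "fp4"):
        size = weight_footprint_gb(params, precision)
        on_32gb = min_gpus_for_weights(params, precision, 32)
        on_96gb = min_gpus_for_weights(params, precision, 96)
        print(f"{precision}: {size:.0f} GB -> {on_32gb} x 32GB or {on_96gb} x 96GB")
```

Under these assumptions, a 200B-parameter model at FP16 needs about 400GB for weights, which is at least thirteen 32GB cards but only five 96GB cards. Fewer, larger cards also mean fewer splits and less inter-GPU traffic.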

With the advent of AI, Nvidia now differentiates its GPU offerings by memory capacity, which results in a steep price curve if you're looking for higher-memory configurations. Modders have found workarounds: blower-style RTX 4090s modified to carry 48GB of memory are common in the Chinese community.

It might take months before prices normalize since practically every new GPU flies off the shelves instantly and is never seen at MSRP. You might want to check out the used market for last-generation GPUs, which can usually be found near their launch MSRPs or even lower.


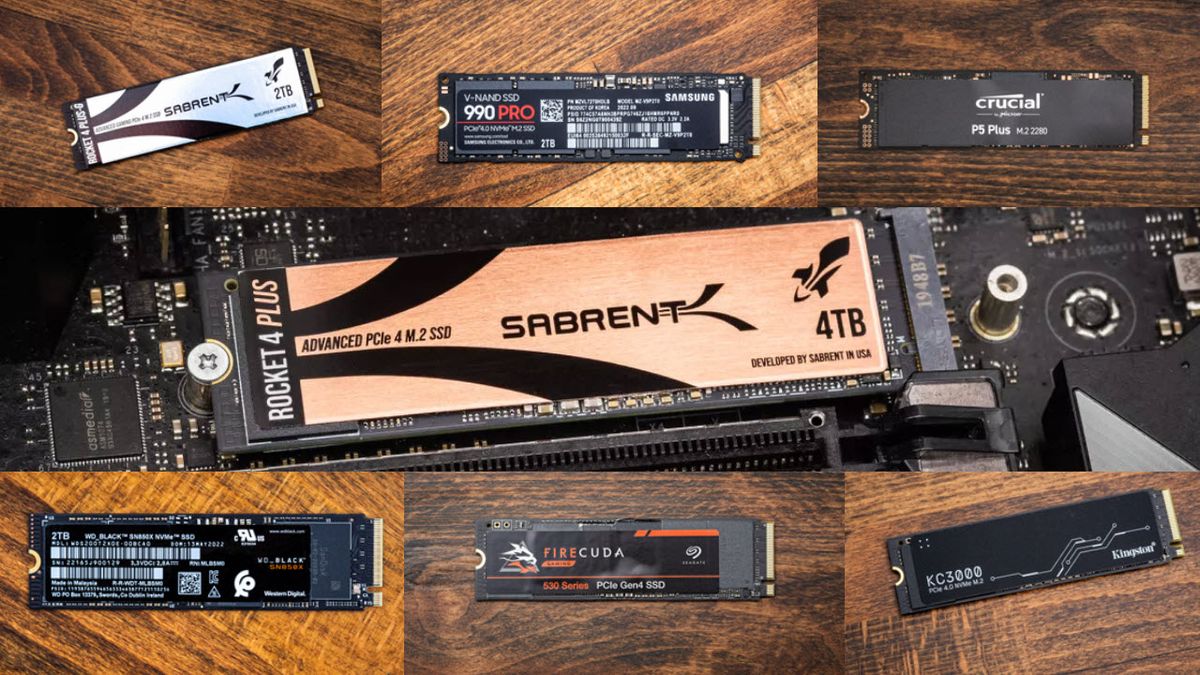
